Contents of the Article:

- Introduction

- How Peripheral Computing works

- The Periphery And The Data Center Advantages

- Internet of Things And Peripheral Devices Integration

Introduction

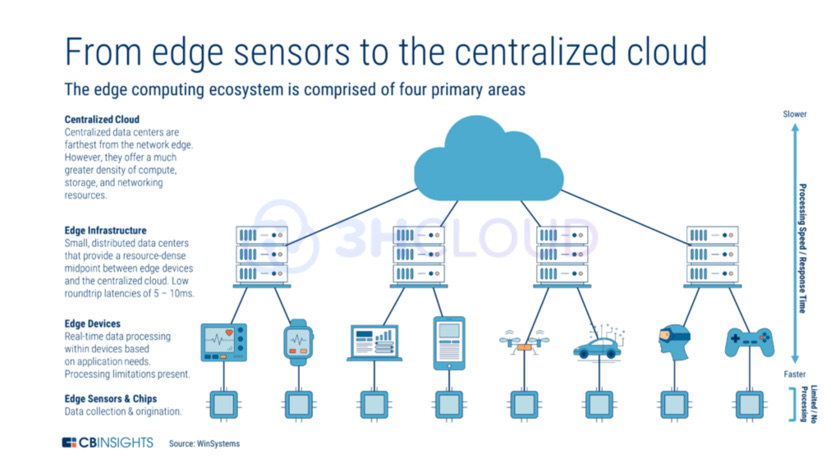

The concept of peripheral (edge) computing involves building a hierarchical IT infrastructure in which resources are distributed away from the central data center and towards the edge of the network. This decentralized approach places computing resources close to the origin of primary "raw" data, so that initial processing can occur before the data is transferred to a higher computing node. Rather than taking place in centralized data centers, data collection and analysis happen at the source, where data flows are actively generated.

How Peripheral Computing works

Conceptually, this technology resembles a remote telecommunications facility: its purpose is to bring computing resources nearer to the location where data is gathered.

In terms of innovation, the industrial automation industry is at the forefront of several emerging technologies. These technologies are the following:

- augmented reality;

- artificial intelligence;

- cloud-based dispatch control;

- data acquisition systems;

- programmable automation system controllers.

The integration of automation technologies is evident in the widespread connectivity of the Internet of Things (IoT) and its utilization of intelligent sensors. This connectivity extends to the Industrial Internet of Things, which offers valuable insights for product maintenance, inventory management, and transportation.

Yet simply establishing network connections and managing data flow is insufficient to fully harness the potential of digital transformation. Manufacturers must fully embrace industrial automation to enhance their competitiveness. This entails leveraging the data collected within the IoT ecosystem to generate valuable analytics, which in turn enables faster, more accurate, and more cost-effective decision-making. To accomplish this, computing power must be decentralized and brought to the boundary of the network.

Implementation scheme of edge computing

The Periphery And The Data Center Advantages

The main objective of edge computing is to relocate computing resources, typically housed in a highly scalable cloud data center that may be at a considerable distance, to the "edge" of the network. This strategy aims to minimize network latency and enhance computing capabilities by processing data closer to its origin. By utilizing a peripheral network, mobile applications can leverage artificial intelligence and machine learning algorithms to a greater extent. Presently, these applications rely heavily on the computing power of mobile processors, and complex calculation tasks often lead to faster battery drain.

Peripheral computing serves as an accelerator for IoT, offering numerous advantages and potential opportunities:

- Data analysis and filtering can occur in close proximity to the sensors by conducting calculations on the peripherals. This minimizes unnecessary data transmission to the cloud, ensuring only relevant information is sent.

- In certain cases, time is of the essence in the production process. Achieving a quick response time in milliseconds is vital for guaranteeing safe and precise operations. Relying on the IoT cloud platform for results can cause unacceptable delays.

- Peripheral calculations allow for data to be processed in a location that is shielded against direct network connections. Consequently, this provides heightened control over the security and confidentiality of sensitive information.

- The need for extensive cloud data storage capacity and network bandwidth is reduced when utilizing peripheral computing. This leads to reduced costs as a substantial amount of data can be processed directly at the edge.
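The filtering idea from the list above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `Reading` type, the `filter_at_edge` function, and the deadband threshold are all hypothetical names and values chosen for the example. The sketch assumes that a reading is only worth sending to the cloud when it differs noticeably from the last value already forwarded for the same sensor.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass
class Reading:
    """One sensor sample (hypothetical structure for this sketch)."""
    sensor_id: str
    timestamp: float  # seconds since some reference point
    value: float      # e.g. temperature in degrees Celsius


def filter_at_edge(readings: Iterable[Reading], deadband: float) -> List[Reading]:
    """Keep a reading only if it differs from the last forwarded value
    of the same sensor by more than `deadband`."""
    last_sent: Dict[str, float] = {}
    to_forward: List[Reading] = []
    for r in readings:
        previous = last_sent.get(r.sensor_id)
        # The first reading of a sensor is always forwarded.
        if previous is None or abs(r.value - previous) > deadband:
            to_forward.append(r)
            last_sent[r.sensor_id] = r.value
    return to_forward


if __name__ == "__main__":
    raw = [
        Reading("t1", 0.0, 20.0),
        Reading("t1", 1.0, 20.1),
        Reading("t1", 2.0, 20.2),
        Reading("t1", 3.0, 21.5),
        Reading("t1", 4.0, 21.6),
    ]
    kept = filter_at_edge(raw, deadband=0.5)
    print(f"forwarded {len(kept)} of {len(raw)} readings")
```

A suitable deadband depends on the sensor and the process being monitored; a value that is too large hides real changes, and one that is too small saves little bandwidth.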

Edge computing has emerged as a prominent architectural approach, enabling the concentration of various computational tasks. It offers several key benefits, such as reduced network latency in data processing and efficient handling of massive data volumes. However, it is not without its flaws. Inadequate protocol stack interoperability and a lack of standardization pose significant challenges. Consequently, devices and applications operating at the network's boundary exist as separate and independent Edge ecosystems.

Edge computing architecture brings computing resources closer to data and devices. Experts see it as a key paradigm beyond cloud computing, particularly for digital scenarios that require extremely low latency. However, the existing variety of interfaces and the lack of industry standards greatly slow down progress, because they deprive devices and apps of the ability to interact with each other.

Internet of Things And Peripheral Devices Integration

Initially, organizations developed strategies for deploying and managing the Internet of Things on edge computing systems. Now that edge computing is everywhere and companies deploy it widely, they face new problems in IoT.

Here are five areas that may cause some confusion, as well as recommendations for IT teams on what they can do now to be better prepared.

IoT and edge computing integration

There are several levels of integration at which the IoT and edge computing create problems. The first level is the integration of IoT and edge computing with the core IT systems deployed in production, finance, engineering, and other areas. Many of these core systems are old. If they do not have APIs that provide integration with Internet of Things technology, then ETL (extract, transform, load) software may be required to upload data to these systems.

The second aspect concerns the IoT itself, where numerous independent vendors construct their own IoT devices with individual operating systems, creating challenges in integrating different devices within a single edge computing architecture. Governments now recognize the need to standardize security measures and regulatory compliance for IoT providers in order to conduct business. Thus, the implementation of consistent IoT standards could streamline the integration process.

Although IoT security and compliance regulations are still being developed, they are expected to improve in the coming years. IT professionals can already ask IoT providers about available integration options and about their plans to ensure compatibility with future products.

Regarding the integration of older systems, utilizing ETL (Extract, Transform, Load) methods can be beneficial if system APIs are unavailable. Alternatively, developing an API specifically for integration is possible, albeit time-consuming. Encouragingly, vendors of older systems are showing interest in IoT applications and are actively developing their own APIs. Thus, IT departments should engage with primary system vendors to understand their plans for IoT integration.
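As a rough illustration of the ETL route, the sketch below moves IoT readings into a table that a legacy system could import. Everything specific here is an assumption made for the example: the JSON field names (`device`, `ts`, `temp_f`), the target table `machine_temps`, and the use of SQLite as a stand-in for the legacy database. A real integration would follow whatever import format the older system actually accepts.

```python
import json
import sqlite3
from typing import Iterable, Iterator, Tuple

Row = Tuple[str, str, float]


def extract(lines: Iterable[str]) -> Iterator[dict]:
    """Extract: parse one JSON object per line, skipping blank lines."""
    for line in lines:
        line = line.strip()
        if line:
            yield json.loads(line)


def transform(records: Iterable[dict]) -> Iterator[Row]:
    """Transform: map the IoT payload to the legacy column layout and
    convert Fahrenheit to Celsius (hypothetical requirement)."""
    for rec in records:
        celsius = (rec["temp_f"] - 32.0) * 5.0 / 9.0
        yield rec["device"], rec["ts"], round(celsius, 2)


def load(conn: sqlite3.Connection, rows: Iterable[Row]) -> int:
    """Load: insert the rows into the staging table and return the row count."""
    batch = list(rows)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS machine_temps "
        "(machine_id TEXT, measured_at TEXT, temp_c REAL)"
    )
    conn.executemany("INSERT INTO machine_temps VALUES (?, ?, ?)", batch)
    conn.commit()
    return len(batch)


if __name__ == "__main__":
    payload = [
        '{"device": "press-01", "ts": "2024-01-01T00:00:00", "temp_f": 212.0}',
        '{"device": "press-02", "ts": "2024-01-01T00:01:00", "temp_f": 32.0}',
    ]
    connection = sqlite3.connect(":memory:")
    inserted = load(connection, transform(extract(payload)))
    print(f"loaded {inserted} rows")
```

Keeping the three stages as separate functions makes it easier to swap the load step later, for instance when the legacy vendor ships its own API.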

Security

New laws on cybersecurity should make compliance with the security demands of the Internet of Things easier. However, they will not solve the problem that end users who are inexperienced in security administration will most likely be responsible for monitoring edge computing and its security. It is therefore important to train end-user employees to protect IoT computing processes. Access is another important security issue. Only authorized employees who have been trained on internal security issues should be allowed into the secure zones that house IoT equipment.

Support

IoT networks need day-to-day support to address device failures, security issues, software updates, and the addition of new equipment.

End users can monitor those parts of the edge computing Internet of Things that are directly related to their operations. It is reasonable for IT departments to take over general maintenance and support.

First of all, IT needs to be aware of what is happening. With the growth of shadow IT, end users are turning directly to vendors to purchase and install IoT devices for their operations.

To detect these additions, IT professionals can use corporate network tools and asset management software that flags newly connected devices. IT departments and end users can then agree on the types of local support.
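The detection step amounts to comparing what is seen on the network with what is recorded in the asset inventory. The sketch below shows that comparison only; how the list of discovered devices is obtained (a network scan, DHCP logs, a vendor tool) is left out, and the function names and MAC addresses are hypothetical.

```python
from typing import Iterable, List


def normalize_mac(mac: str) -> str:
    """Normalize a MAC address to lowercase, colon-separated form."""
    return mac.strip().lower().replace("-", ":")


def find_unregistered(discovered: Iterable[str], inventory: Iterable[str]) -> List[str]:
    """Return devices seen on the network that are absent from the inventory."""
    known = {normalize_mac(m) for m in inventory}
    seen = {normalize_mac(m) for m in discovered}
    return sorted(seen - known)


if __name__ == "__main__":
    asset_inventory = ["aa:bb:cc:00:00:01", "aa:bb:cc:00:00:02"]
    seen_on_network = ["AA-BB-CC-00-00-01", "aa:bb:cc:00:00:03"]
    for mac in find_unregistered(seen_on_network, asset_inventory):
        print(f"unregistered device: {mac}")
```

Each address reported this way is a candidate shadow IT device that IT and the owning department can review together.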

Survivability

When IoT devices are deployed in dangerous or hard-to-reach areas, it is important to use solutions that are self-sufficient and require minimal maintenance for long periods of time.

IoT devices that operate in extreme heat or cold, or in otherwise harsh working conditions, must be rated for those conditions.

It may also be important to find IoT solutions that can support themselves for long periods of time without the need for replacement or constant maintenance. It is common for Internet of Things devices to have a life cycle of 10 to 20 years. The frequency of maintenance of such devices and sensors can be reduced if they can be powered by solar energy (and, therefore, depend less on batteries), or if they are woken from sleep mode only when movement or other monitored events are detected.
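The effect of sleep mode on maintenance intervals can be estimated with simple arithmetic. The sketch below is a rough model under stated assumptions: the battery capacity, the active and sleep currents, and the duty cycle are illustrative figures rather than values for any particular device, and the model ignores battery self-discharge and temperature effects.

```python
def average_current_ma(active_ma: float, sleep_ma: float, duty_cycle: float) -> float:
    """Average current draw when the device is active for `duty_cycle`
    (a fraction between 0 and 1) of the time and asleep otherwise."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_ma + (1.0 - duty_cycle) * sleep_ma


def battery_life_hours(capacity_mah: float, active_ma: float,
                       sleep_ma: float, duty_cycle: float) -> float:
    """Estimated runtime in hours: capacity divided by average current."""
    return capacity_mah / average_current_ma(active_ma, sleep_ma, duty_cycle)


if __name__ == "__main__":
    # Hypothetical sensor: 2400 mAh battery, 20 mA awake, 0.01 mA asleep.
    always_on = battery_life_hours(2400, 20.0, 0.01, duty_cycle=1.0)
    mostly_asleep = battery_life_hours(2400, 20.0, 0.01, duty_cycle=0.01)
    print(f"always on:      {always_on:.0f} hours")
    print(f"awake 1% of it: {mostly_asleep:.0f} hours")
```

Even in this simplified model, waking the device only for events stretches the interval between battery replacements from days to more than a year.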

It is necessary to include survivability as one of the requirements for peripheral Internet of Things solutions to minimize maintenance and field inspections.

Bandwidth

Most IoT devices are economical in their bandwidth usage. However, as new IoT devices are added and more computation is required, available bandwidth will decrease, and the ability to work in real time will be compromised.

A preferred method for implementing distributed IoT systems is to use edge computing, where companies can utilize local communication channels. Valuable data can be transmitted either at the end of the day or periodically throughout the day to centralized data collection points within the enterprise, regardless of whether these points are on-premises or in the cloud. This distributed computing approach not only reduces the need for long-distance communication bandwidth but also allows data processing to be scheduled during less busy and more cost-effective periods.
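One way to picture this periodic transfer is to summarize readings locally and upload only the summaries. The sketch below is an assumption-laden example: the tuple layout `(sensor_id, timestamp, value)`, the `summarize` function, and the choice of count, minimum, maximum, and mean as the "data of value" are all hypothetical. The actual upload to the central collection point is not shown.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Tuple

Sample = Tuple[str, float, float]  # (sensor_id, timestamp in seconds, value)


def summarize(readings: Iterable[Sample], window_s: int) -> List[dict]:
    """Group readings into fixed time windows per sensor and return
    one summary record per (sensor, window)."""
    buckets: Dict[Tuple[str, int], List[float]] = defaultdict(list)
    for sensor_id, ts, value in readings:
        window_start = int(ts // window_s) * window_s
        buckets[(sensor_id, window_start)].append(value)
    return [
        {
            "sensor_id": sensor_id,
            "window_start": window_start,
            "count": len(values),
            "min": min(values),
            "max": max(values),
            "mean": mean(values),
        }
        for (sensor_id, window_start), values in sorted(buckets.items())
    ]


if __name__ == "__main__":
    local_buffer = [("pump-1", 0, 1.0), ("pump-1", 30, 3.0), ("pump-1", 61, 5.0)]
    for record in summarize(local_buffer, window_s=60):
        print(record)  # in practice, queued for the scheduled upload
```

The window length trades detail for bandwidth: longer windows mean fewer records to send but coarser data at the central site.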

It is crucial to involve data architects and business stakeholders in determining the type of IoT data to be collected. This involvement can help reduce data workloads, bandwidth usage, and the costs of data processing and storage by eliminating data that is not relevant to the business.