This article compares desktop and enterprise graphics cards and looks at the conditions and tasks in which each type demonstrates maximum efficiency.

Basic differences

Desktop graphics cards come in laptops and personal computers. Their functions are more suitable for fast gaming and private graphics work.

Enterprise graphics cards sometimes don't even have video output connectors (HDMI, DVI). They also don't always support DirectX, so games may not run on such devices.

Enterprise graphics cards help you do a lot of computing with less power consumption. Such graphics cards are used in ML development, video production, rendering and modeling of complex objects.

What else is different

The similarities and differences between desktop and enterprise hardware can be discussed on several conventional levels. In this article, we'll delve deeper into comparing specific graphics cards, but a comprehensive understanding requires more context first.

Upper level. Production and productivity

Each desktop graphics card comes in many variants from different manufacturers, such as Asus, Gigabyte and MSI. Both NVIDIA and AMD usually license their technology to third-party manufacturers, so the same video card can be found at a wide range of prices depending on the manufacturer.

That said, NVIDIA and AMD generally try to build enterprise graphics cards themselves and are reluctant to license the manufacture of such hardware to others.

The most important parameter affecting both productivity and durability is the operating temperature.

Graphics cards solve the cooling problem in different ways. Ventilation systems in data centers can cool entire clusters, and a stable microclimate is maintained in the room with air composition monitoring. These conditions are hard to reproduce in a home rack, so it is not surprising that enterprise graphics cards run hotter there.
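Since operating temperature matters this much, it is worth checking it on your own machine. Below is a minimal sketch, assuming an NVIDIA card with the `nvidia-smi` tool available on the PATH. The helper names and the 83-degree limit are hypothetical choices for illustration, not vendor recommendations.

```python
import subprocess

# Assumption: an NVIDIA driver is installed and nvidia-smi is on the PATH.
QUERY = [
    "nvidia-smi",
    "--query-gpu=temperature.gpu",
    "--format=csv,noheader,nounits",
]


def parse_temperatures(output):
    """Turn nvidia-smi output (one number per line, one line per GPU) into ints."""
    return [int(line.strip()) for line in output.splitlines() if line.strip()]


def read_gpu_temperatures():
    """Ask the driver for the current core temperature of every GPU, in Celsius."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_temperatures(result.stdout)


def too_hot(temps, limit_c=83):
    """Return the indices of GPUs at or above the limit (hypothetical threshold)."""
    return [index for index, temp in enumerate(temps) if temp >= limit_c]
```

On a machine with a supported card, `too_hot(read_gpu_temperatures())` would return the indices of the cards that need attention. Running it under load in a home rack and in a hosted environment is one rough way to see the difference described above.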

Middle level. Drivers and software

The software and driver version can be found here. Desktop graphics cards are primarily designed to deliver good frame rates across a wide range of games and everyday applications. Enterprise systems are built for stability when dealing with large projects. Applications written for enterprise graphics cards generally make it possible to scale and cluster graphics processors to handle large amounts of data.

Drivers for enterprise-level graphics cards don't mesh well with desktop hardware, and vice versa.

Lower level. Architecture and Specification

At a lower level, we can talk about architectural differences in memory.

Enterprise graphics cards use ECC (Error-Correcting Code) memory. Error correction runs continuously, so this memory relies heavily on the size and speed of the memory system. In return it saves time: processes do not need to be restarted after a memory error, and the system runs stably.

There are two types of errors: memory and device. Moreover, the latter are quite common on desktop graphics cards.

Gaming graphics cards are less suitable for complex rendering precisely because of device errors resulting from electrical and magnetic interference in the computer.

Gaming graphics cards use non-ECC memory, which is slightly faster for simple objects, but performs worse, for example, when building ML experiments. That is, when you have to work with a lot of data and variables.
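To make the idea of error-correcting memory more concrete, here is a toy sketch of a classic Hamming(7,4) code, which stores 4 data bits together with 3 parity bits and can repair any single flipped bit. This is only an illustration of the principle: real ECC memory uses wider codes implemented in hardware, and the function names here are made up for the example.

```python
def hamming74_encode(data_bits):
    """Encode 4 data bits [d1, d2, d3, d4] into a 7-bit codeword."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    # Codeword layout, positions 1..7: p1 p2 d1 p3 d2 d3 d4
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword):
    """Return (data_bits, error_position); position 0 means no error was found."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    # The three checks read as a binary number give the 1-based faulty position.
    position = s1 + 2 * s2 + 4 * s3
    if position:
        c[position - 1] ^= 1  # flip the faulty bit back
    return [c[2], c[4], c[5], c[6]], position


stored = hamming74_encode([1, 0, 1, 1])
corrupted = list(stored)
corrupted[4] ^= 1  # simulate a single bit flip, e.g. from interference
recovered, position = hamming74_decode(corrupted)
print(recovered, position)
```

The flipped bit is located and repaired without rerunning anything, which is the property that lets long renders or training jobs continue instead of restarting. Non-ECC memory skips the extra parity bits and the checking work.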

Let's move on to analyzing specific examples.

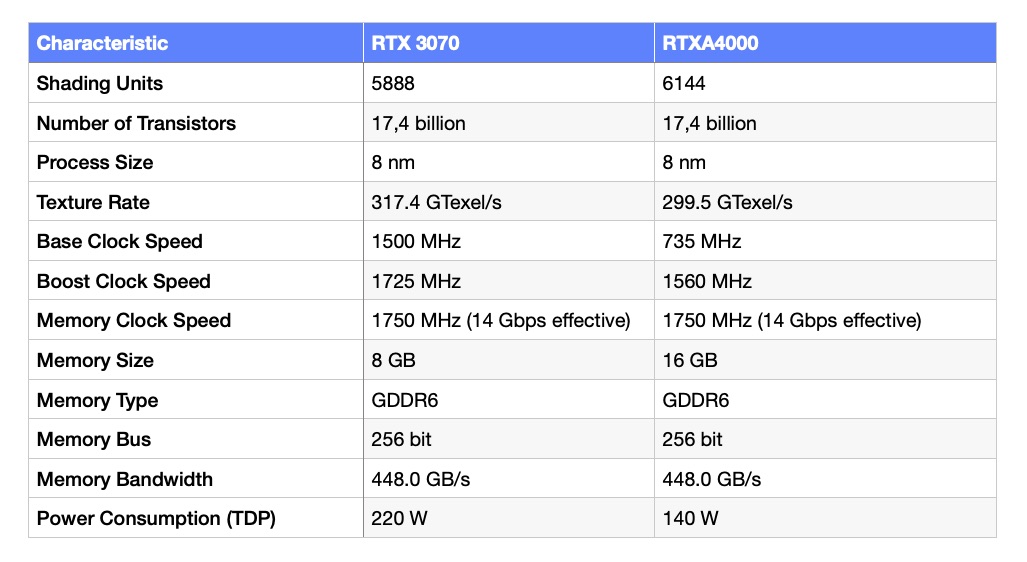

3070 VS A4000

RTX A4000 is a compact single-slot card, very similar to RTX 3070, but with a full chip and uncut cores.

Usually manufacturers of enterprise graphics cards do not pay much attention to the cooling system. This is due to the fact that data center systems are responsible for their cooling. RTX A4000 managed to achieve a relatively small temperature difference with RTX 3070. The difference under load is only 7-9 degrees.

Despite the fact that video cards of this class are designed for graphics and AI-related computing, they have also gained traction in the gaming segment.

With 16GB of video memory, the A4000 should remain relevant for a long time to come. It is perhaps the best option for working with animation in the middle segment.

Although the RTX A4000 is slightly ahead of its desktop counterpart in terms of the number of stream processors and maximum memory capacity, it's hard to pick a clear winner here.

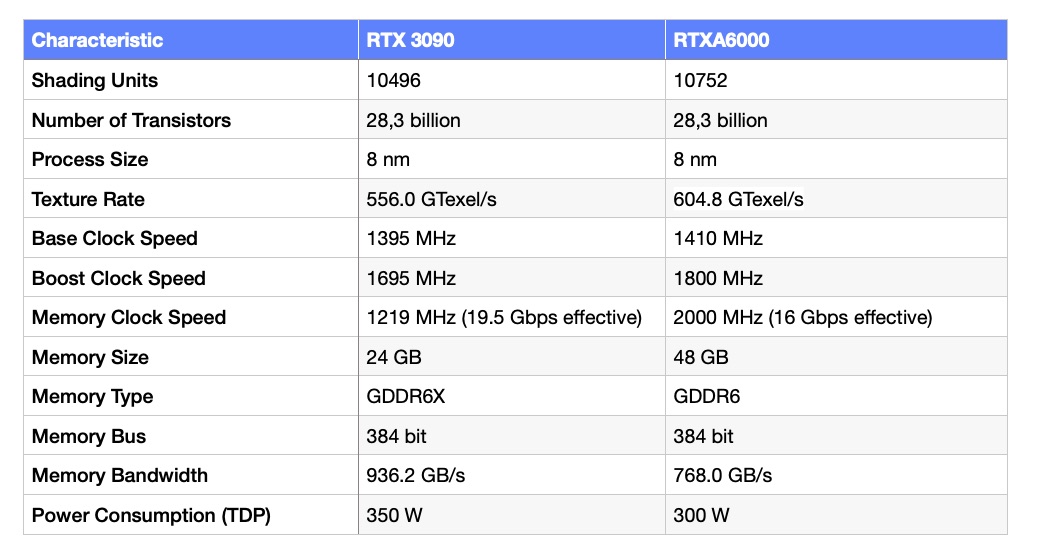

3090 VS A6000

The first thing to note when comparing these graphics cards is that they are based on the same GA102 chip. Some metrics are completely identical, so it's not surprising that the cards show virtually identical rendering times in the default Blender or Maya models. The difference does not exceed 1%.

This duo can be called a battle of the titans, as the best representatives of their pedigrees meet here. The graphics cards are similar in many ways, but the RTX A6000 is the only card in the enterprise list that surpasses its opponent in terms of texturing speed.

For more complex tasks, such as rendering entire film scenes in RedShift, the RTX A6000 can run twice as fast. The matter here is not just about the volume of video memory, but also about the automatic error handling.

If you combine the performance of two RTX 3090s using NVLink, you can theoretically achieve similar performance, but you can't save money with this design.

Buy or rent a video card?

The field of application of GPU servers is quite wide: photo and video rendering, building 3D models, processing and analyzing large data sets, statistical calculations, encryption.

The advantages of purchasing your own equipment are debatable and largely depend on the expected loads and operating conditions. Buying is quite expensive for companies and individuals who need GPU-based servers only for hypothesis testing or machine learning experiments, for example. You will also need to maintain your IT infrastructure yourself.

The issue doesn't stop at buying a graphics card. You need assembly, a case, a motherboard, CPU, drives, and you also have to pay for electricity.

Price and time

If you understand that the machine is needed for years to come for uncomplicated projects, it's worth considering the purchase scenario in more detail. Yes, you'll have to buy not only the card but also the components, assemble everything, and maintain it. However, this approach will allow for savings in the long run. For freelance designers, for example, it's a genuine investment in themselves and their projects.

If the project tasks involve session-based work or extensive computational processes, it's better to use a GPU in the cloud. This approach will help determine how many resources the project needs. If the initial assessment doesn't hold up, you can easily scale or optimize the project's IT infrastructure.
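The choice between the two scenarios can be roughly estimated with a break-even calculation. The sketch below is a simplification under stated assumptions: every figure in it is hypothetical, the purchase cost is meant to include the rest of the machine (case, motherboard, CPU, drives), and maintenance time and resale value are ignored.

```python
def break_even_hours(purchase_cost, rent_per_hour, power_kw, electricity_per_kwh):
    """Hours of GPU use after which buying becomes cheaper than renting."""
    own_cost_per_hour = power_kw * electricity_per_kwh
    saving_per_hour = rent_per_hour - own_cost_per_hour
    if saving_per_hour <= 0:
        # Running your own machine costs at least as much per hour as renting.
        return float("inf")
    return purchase_cost / saving_per_hour


# Hypothetical figures, in the same currency throughout.
hours = break_even_hours(
    purchase_cost=2500,         # whole workstation, not just the card
    rent_per_hour=0.75,         # cloud GPU price per hour
    power_kw=0.5,               # average draw of the machine under load
    electricity_per_kwh=0.25,   # local electricity price
)
print(f"Buying pays off after about {hours:.0f} hours of use")
```

With these made-up numbers, buying pays off after 4000 hours of load. A freelancer rendering several hours every day may reach that point within a few years, while short session-based experiments may never get there, which matches the reasoning above.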