Improving Energy Efficiency in Data Centers

Discover strategies to minimize the environmental impact of data centers and optimize electricity costs.

The number of data centers has grown from 500 thousand to more than 8 million in just ten years. Together they consume about 3-5% of the planet's total electricity. As a result, each data center indirectly causes greenhouse gas emissions, and together they account for about 2% of global CO2 emissions. This is comparable to the emissions of the world's largest airlines.

It is in providers' interest to consume energy efficiently, without unnecessary CO2 emissions. Doing so reduces the man-made impact of data centers on the environment and lowers electricity costs. To that end, monitor the PUE (Power Usage Effectiveness) value of your own data centers and improve it where necessary.

Energy Efficiency and PUE Indicator

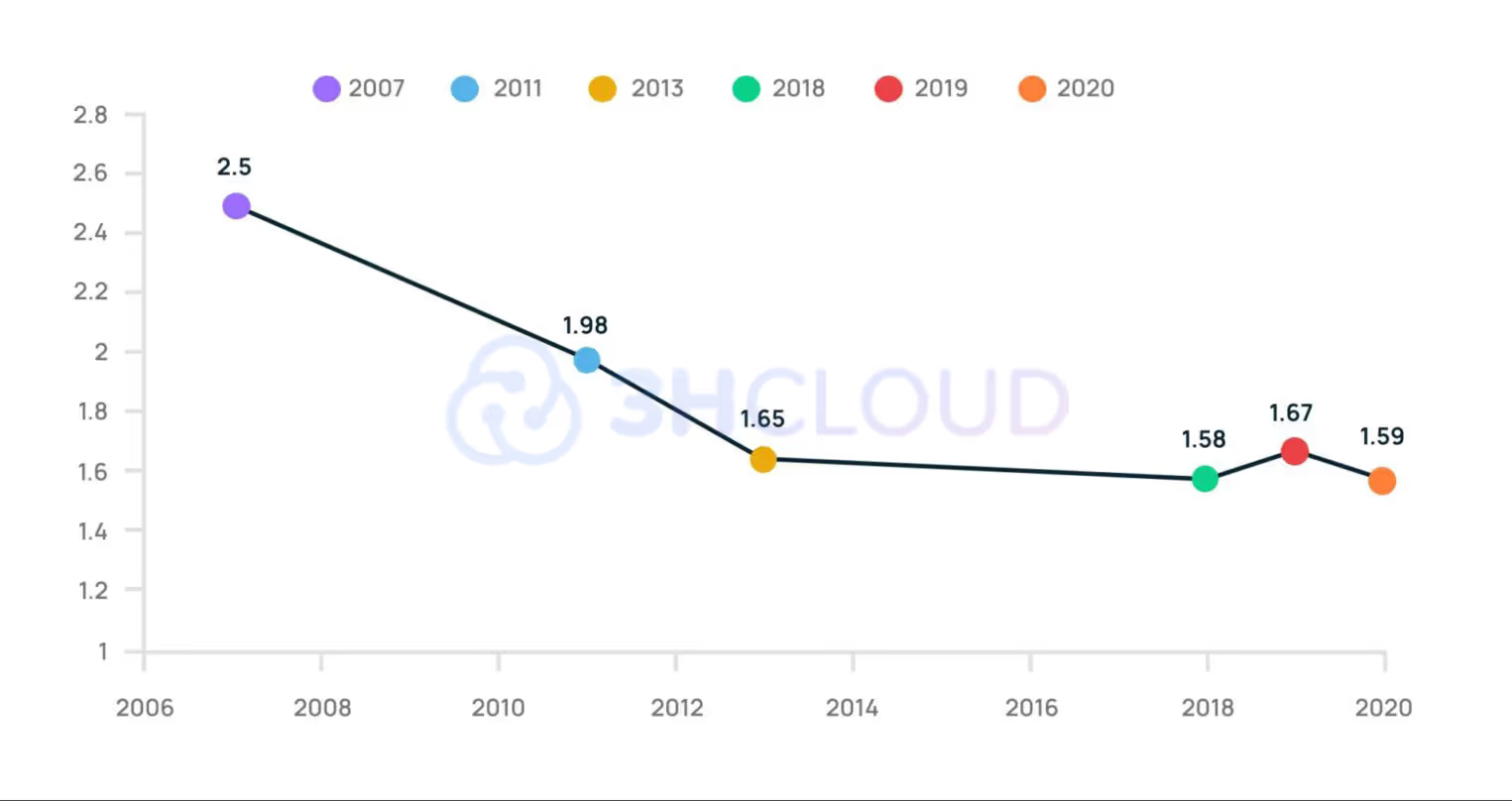

PUE (Power Usage Effectiveness) is an indicator for evaluating the energy efficiency of a data center. The metric was approved by the members of The Green Grid consortium in 2007. PUE is the ratio of the total electrical energy consumed by the data center to the energy consumed by the IT equipment alone. The closer the value is to 1, the less energy goes to cooling, power distribution, and other overhead.
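The ratio can be illustrated with a minimal Python sketch. The function name and the meter readings below are hypothetical and serve only to show the arithmetic.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be below IT energy")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical monthly meter readings, for illustration only.
total_kwh = 125_000.0  # whole facility: IT, cooling, lighting, losses
it_kwh = 100_000.0     # servers, storage, and network equipment

print(f"PUE = {pue(total_kwh, it_kwh):.2f}")  # prints "PUE = 1.25"
```

In this example, every kilowatt-hour delivered to the IT equipment costs an extra 0.25 kWh of overhead.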

PUE was not introduced out of a desire to make the world more environmentally friendly. The reason was financial: electricity prices began to rise rapidly in the early 21st century, so providers started optimizing their energy consumption.

Ways to Reduce PUE

Energy efficiency comes with experience, so we will cover only what we have actually applied in our own data centers.

Expansion of Temperature Ranges

Server equipment used to operate only within a narrow temperature range. Keeping server rooms within that range meant removing a lot of heat, which in turn demanded a significant amount of electricity. One solution is to widen the operating temperature range of the equipment so that less heat has to be removed.

For instance, a previously acceptable temperature was around 18–20°C, but now it's permissible for the temperature to reach 22–24°C. This is fine, as modern server equipment and Storage Area Networks (SANs) reliably operate up to 27°C. Moreover, there are devices today that can function correctly in air temperatures of up to 40°C. As a result, there's less need to adjust the temperature in data centers and more opportunity to utilize the ambient temperature. Thus, maintaining certain conditions in server rooms requires less electricity.

Chillers Instead of Freon Air Conditioners

By modern standards, the PUE of data centers cooled with classical freon air conditioners is rather high: typically 1.6–1.7, compared with around 1.4 for chiller-based systems.
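To get a feel for what this difference means in energy terms, here is a rough Python sketch. The 1,000 kW IT load is a hypothetical figure, and 1.65 is simply the midpoint of the 1.6–1.7 range quoted above.

```python
HOURS_PER_YEAR = 8760


def annual_overhead_kwh(it_load_kw: float, pue: float) -> float:
    """Energy per year spent on everything except the IT equipment itself."""
    return it_load_kw * (pue - 1.0) * HOURS_PER_YEAR


it_load_kw = 1000.0  # hypothetical constant IT load

for label, pue_value in [("freon air conditioners", 1.65), ("chillers", 1.4)]:
    overhead = annual_overhead_kwh(it_load_kw, pue_value)
    print(f"{label}: {overhead:,.0f} kWh/year of overhead")
```

The sketch assumes a constant IT load and a constant PUE throughout the year, which real facilities do not have, so treat the result as an order-of-magnitude estimate.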

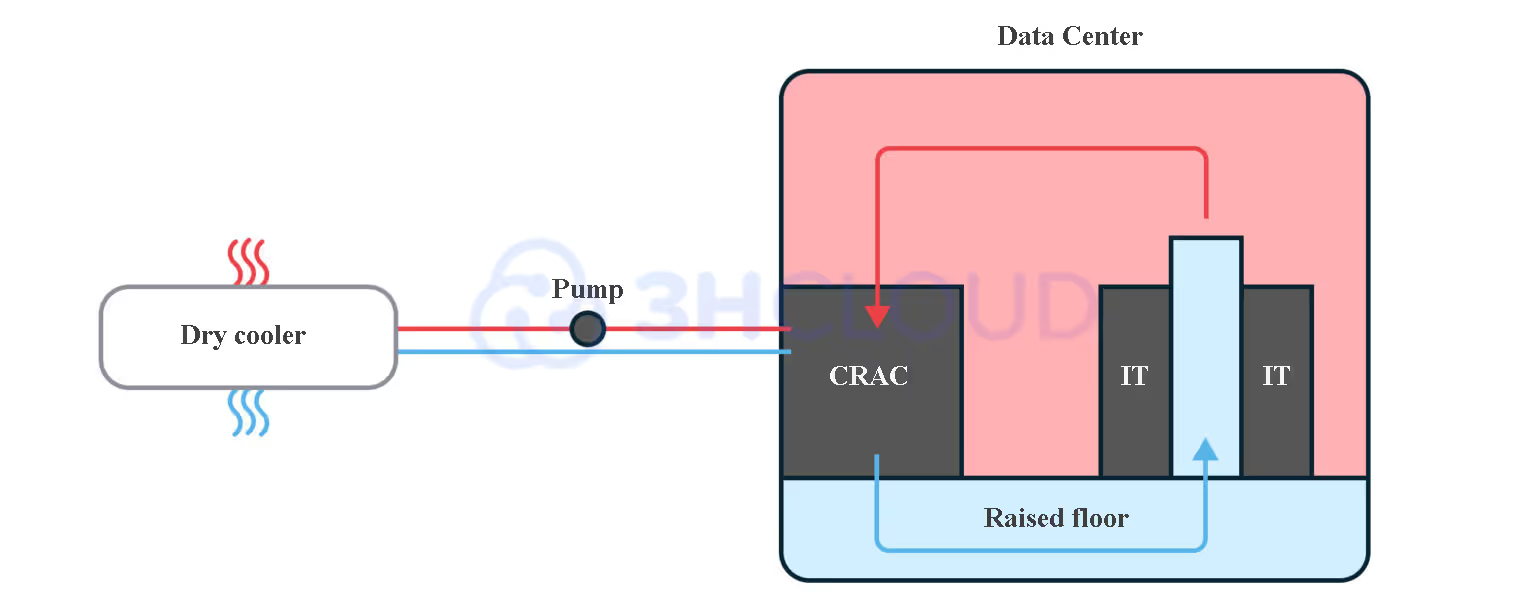

The difference between the chiller system and classical freon air conditioners is that a non-freezing glycol solution circulates in the pipelines between the internal and external units. It remains liquid all the time and does not turn into a gaseous state.

The solution is pumped through the cooling system in the server room, where it absorbs heat in the radiators of the indoor units (which may additionally have a compressor unit), and is then carried to an external heat exchanger (chiller). In the chiller, it is cooled by outdoor air and an additional compressor-condenser unit. The cooled glycol solution is then fed back to the air conditioners inside the data center's server space.

The chiller circuit is more energy efficient because the external compressor-condenser unit of the chiller is activated only at temperatures above 12°C, and the fans of the external unit are started gradually as the temperature of the glycol coolant rises.
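The control logic described here can be sketched in Python as follows. Only the 12°C threshold comes from the text. Treating it as an outdoor air temperature, as well as the fan count and the fan staging temperatures, are assumptions made for illustration.

```python
COMPRESSOR_ON_ABOVE_C = 12.0  # threshold from the text; assumed to be outdoor air


def compressor_enabled(outdoor_temp_c: float) -> bool:
    """The compressor-condenser unit runs only above the threshold."""
    return outdoor_temp_c > COMPRESSOR_ON_ABOVE_C


def fans_running(glycol_temp_c: float, fan_count: int = 4,
                 start_c: float = 20.0, step_c: float = 2.0) -> int:
    """Start fans one by one as the glycol warms up.

    fan_count, start_c, and step_c are hypothetical values.
    """
    if glycol_temp_c < start_c:
        return 0
    stages = int((glycol_temp_c - start_c) // step_c) + 1
    return min(fan_count, stages)


for outdoor_c, glycol_c in [(5.0, 18.0), (10.0, 21.0), (25.0, 27.0)]:
    print(outdoor_c, glycol_c,
          compressor_enabled(outdoor_c), fans_running(glycol_c))
```

Below the threshold the compressor stays off and cooling relies on outdoor air alone, which is where the energy savings come from.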

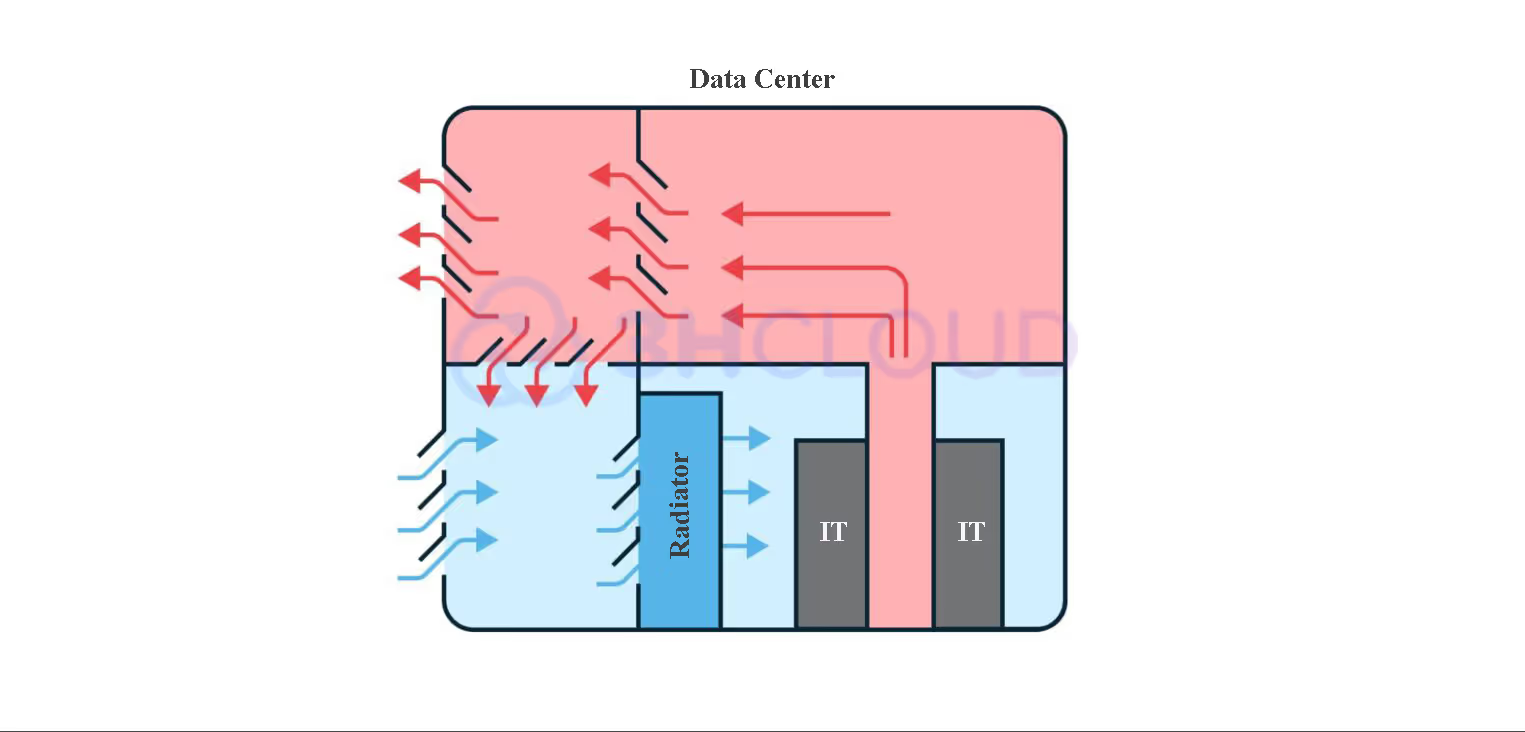

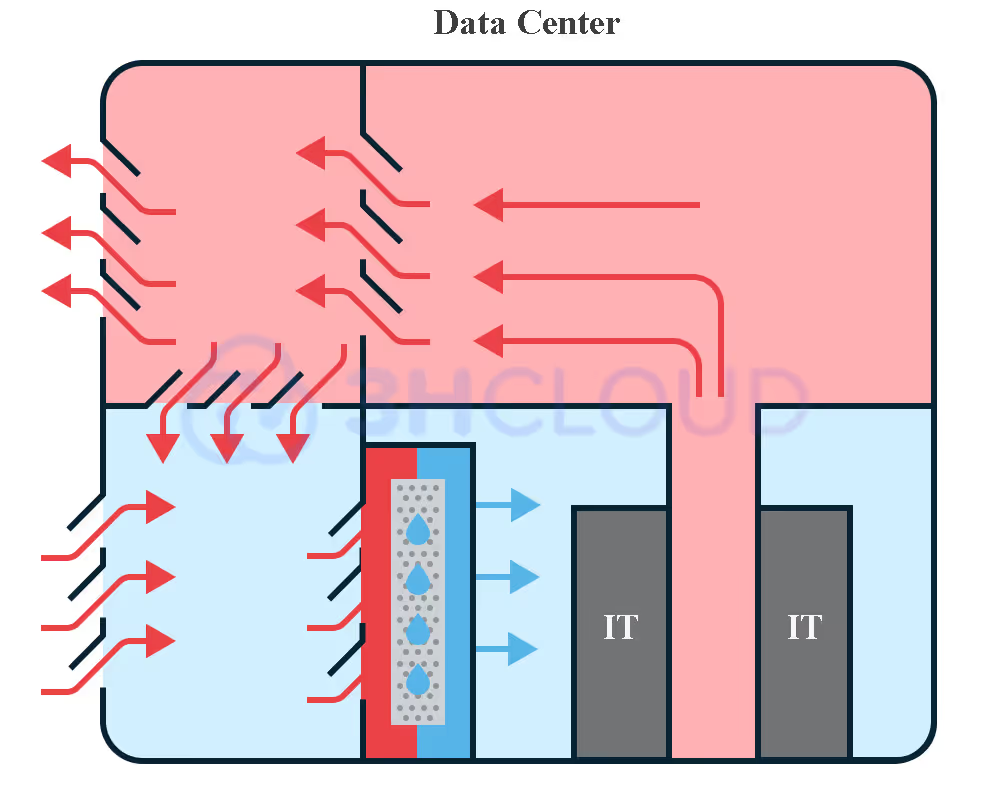

Free Cooling and ARF

The next step is switching to a direct free cooling scheme with additional cooling. This scheme does away with the heat exchangers in which hot air from the data center is cooled. Instead, servers and network equipment are cooled directly by outside air, which passes through additional cooling radiators filled with cold water.

The outside air passes through filters, where it is cleaned of dust, and then enters the server room. In winter, the cold outside air is blended with warm air in the mixing chamber, which keeps the supply air temperature constant.
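The mixing step can be approximated with a simple energy balance. The Python sketch below assumes ideal mixing of two air streams with equal heat capacity, so the supply temperature is a weighted average of the two. The function name and all temperatures are hypothetical.

```python
def outside_air_fraction(outside_c: float, return_c: float,
                         target_c: float) -> float:
    """Share of outside air in the mix needed to reach the target temperature.

    Ideal mixing: target = f * outside + (1 - f) * return.
    """
    if outside_c >= target_c:
        return 1.0  # outside air is not colder than the target: no warm air added
    if return_c <= target_c:
        return 0.0  # warm air cannot raise the mix to the target: recirculate only
    return (return_c - target_c) / (return_c - outside_c)


# Hypothetical winter day: -10 °C outside, 35 °C return air, 22 °C supply target.
fraction = outside_air_fraction(-10.0, 35.0, 22.0)
print(f"outside air share: {fraction:.0%}")
```

A real mixing chamber would adjust its dampers continuously from sensor feedback rather than compute the share once.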

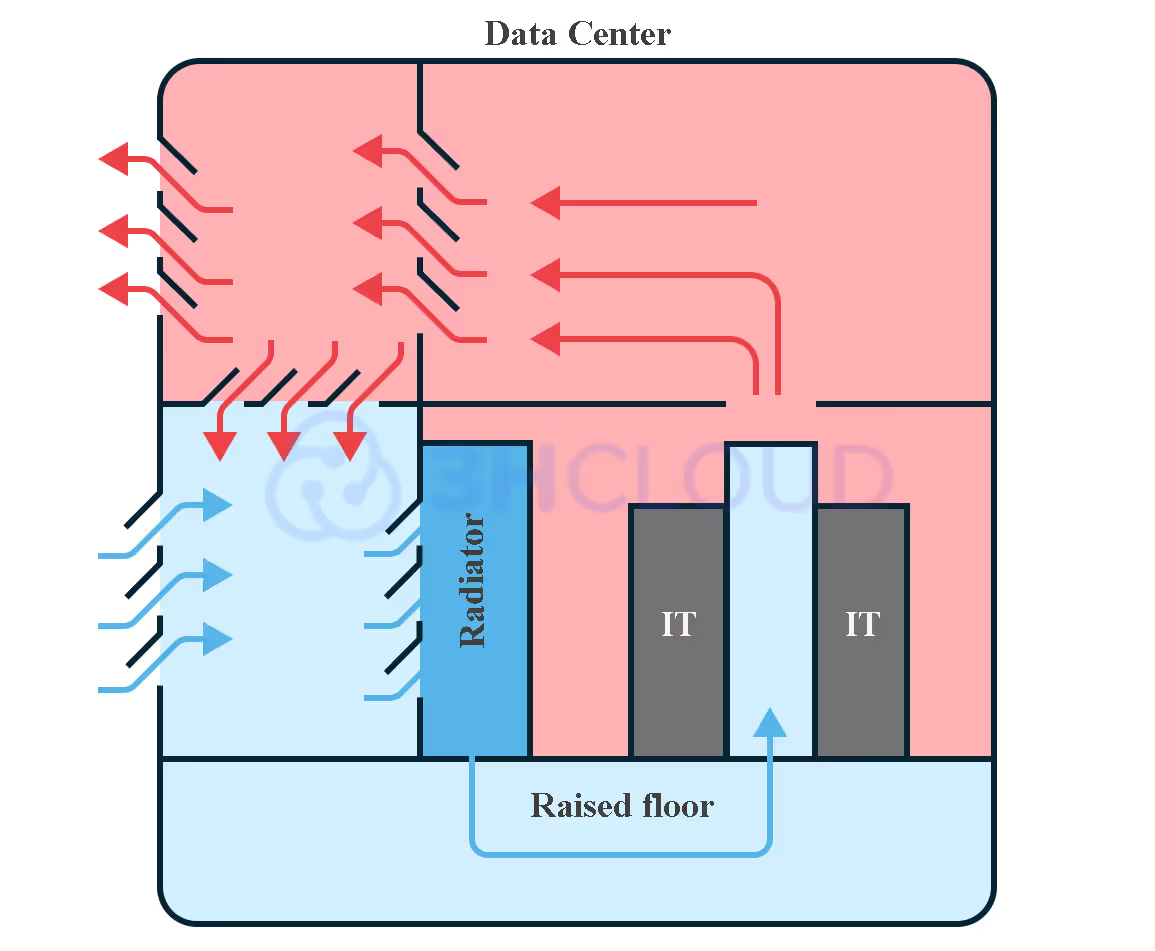

We use direct free cooling with an additional cooling unit: an absorption refrigeration machine (ARF), which is essential in summer heat. The outside air passes through the filters of the ARF heat exchanger and is then supplied under the raised floor to the cold aisles of the server room.

ARF is activated mainly in summer, or whenever the air temperature consistently exceeds 21°C. This is an energy-efficient solution that has allowed us to achieve a PUE of ~1.25. Moreover, ARF is an environmentally safe installation because it runs on water and uses no freon.
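One way to express "consistently exceeds 21°C" in code is to require several consecutive readings above the threshold before switching the ARF on. The Python sketch below is illustrative only: the class name, the window size, and the sample readings are assumptions, and only the 21°C threshold comes from the text.

```python
from collections import deque


class ArfController:
    """Enable ARF only after `window` consecutive readings above the threshold."""

    def __init__(self, threshold_c: float = 21.0, window: int = 6):
        self.threshold_c = threshold_c
        self.readings = deque(maxlen=window)  # keeps only the latest readings

    def update(self, outdoor_temp_c: float) -> bool:
        self.readings.append(outdoor_temp_c)
        window_full = len(self.readings) == self.readings.maxlen
        return window_full and all(t > self.threshold_c for t in self.readings)


controller = ArfController(window=3)  # hypothetical window of three readings
for temp_c in [22.0, 23.0, 20.0, 22.0, 23.0, 24.0]:
    print(temp_c, controller.update(temp_c))
```

Requiring a full window of warm readings keeps the machine from switching on and off during short temperature spikes.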

The ventilation system also maintains a pressure difference between the data center and the external environment. As a result, there is practically no dust in the air: even if dust gets into the server room, it is immediately pushed out.

The maintenance costs of such a system are higher than for conventional cooling. However, a system with ARF saves money by reducing the electricity consumed for heating the air and for its post-cooling.

Free Cooling and Adiabatic Post-Cooling System

Like ARF, the adiabatic cooling system is used in summer or whenever the outside air becomes too warm. The air is pre-cooled by passing it through wetted filters, where evaporating water lowers its temperature.

Other Energy Efficiency Practices

The environmental friendliness of a data center depends not only on heat emissions but also on electricity consumption. Let's therefore consider practices for reducing it.

Monitoring of Energy Supply

Making proper use of the capacity of a virtualized data center can help increase energy efficiency. With default settings, our servers reduce their own power consumption when there is no load. Still, it is important to verify that the specific power management settings are configured correctly.

Even a server with a minimal configuration consumes at least 100 watts, and a more powerful unit needs about 600 watts. To track efficiency, you need to set up monitoring of both the infrastructure elements and the load.
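As a rough illustration of what these figures add up to, the Python sketch below estimates the daily energy use of a group of servers. The server count and the PUE of 1.25 used to include facility overhead are hypothetical inputs, and the calculation assumes a constant power draw.

```python
def daily_energy_kwh(server_watts: float, count: int = 1,
                     pue: float = 1.0) -> float:
    """Energy per day for `count` servers, scaled by PUE for facility overhead."""
    return server_watts * count * 24 / 1000 * pue


# Power figures from the text; count and PUE are assumptions.
for label, watts in [("minimal server", 100.0), ("powerful server", 600.0)]:
    it_only = daily_energy_kwh(watts, count=10)
    with_overhead = daily_energy_kwh(watts, count=10, pue=1.25)
    print(f"10 x {label}: {it_only:.1f} kWh/day IT, "
          f"{with_overhead:.1f} kWh/day with overhead")
```

Actual draw varies with load, which is why continuous monitoring is more reliable than an estimate like this one.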

Special DCIM (Data Center Infrastructure Management) systems can be used for monitoring, complemented by support from the engineering and technical department.

Dynamic UPS Instead of Static

Ensuring uninterrupted power supply in server rooms is a key responsibility of data centers towards their customers. The efficiency of these power supplies, especially in terms of resource consumption, is also crucial.

A classic static UPS (with batteries) powers the equipment for 5-30 minutes during a power outage. This is generally enough time to switch over to diesel generator sets (DGS).

While static UPS systems back up the critical load, they have several disadvantages compared to dynamic UPS systems. The main ones are:

- the occupied space;

- the narrow temperature range of operation;

- the need to replace and dispose of batteries.

A dynamic UPS works by storing kinetic energy in a rotating flywheel. When the power fails, the inertia of the spinning flywheel keeps generating electricity. The main disadvantage of a dynamic UPS is its limited backup time: it can support the equipment for about 30 seconds at full load. However, this is typically enough to bring the DGS online.

A dynamic UPS needs no batteries, so the freed-up space can be used, for example, to accommodate server racks.
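The short backup time follows from the physics of the flywheel: the stored kinetic energy is E = ½·I·ω², and only the part released while the flywheel slows from full speed to its minimum usable speed is available. The Python sketch below estimates the ride-through time. All numeric inputs (moment of inertia, speeds, load, conversion efficiency) are hypothetical and do not describe any particular product.

```python
import math


def rpm_to_rad_s(rpm: float) -> float:
    """Convert revolutions per minute to radians per second."""
    return rpm * 2 * math.pi / 60


def ride_through_seconds(inertia_kg_m2: float, rpm_full: float, rpm_min: float,
                         load_kw: float, efficiency: float = 0.95) -> float:
    """Time the flywheel can carry the load while slowing to rpm_min."""
    w_full = rpm_to_rad_s(rpm_full)
    w_min = rpm_to_rad_s(rpm_min)
    usable_joules = 0.5 * inertia_kg_m2 * (w_full ** 2 - w_min ** 2)
    return usable_joules * efficiency / (load_kw * 1000)


# Hypothetical flywheel and load, for illustration only.
seconds = ride_through_seconds(inertia_kg_m2=600.0, rpm_full=3000.0,
                               rpm_min=1500.0, load_kw=1000.0)
print(f"estimated ride-through: {seconds:.0f} s")
```

Because the energy grows with the square of the rotation speed, most of it is released early in the slowdown, and doubling the load halves the ride-through time.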

Smart Lighting

The total power consumption of the data center can be reduced by replacing the lighting systems. Smart lighting systems work on a simple principle: the light turns on only when a person appears in the server room. The rest of the time, only emergency lighting works.

Why Data Centers Are Essential Beyond Just Business

PC power consumption is growing because people buy ever more powerful hardware. But there is a problem: not every PC owner cares about the energy efficiency of their device, which can consume several kWh per day.

Many people turn to data centers to reduce their financial costs after seeing an electricity bill.

This means that in the future it may be more profitable, more energy efficient, and more environmentally friendly to use the virtual resources of a cloud provider. Virtualization makes it possible to redistribute and increase processor power, RAM, and storage as needed. Electricity is therefore spent only on useful work, not on idle equipment.

So, users' capital costs become operational ones, electricity is consumed more efficiently, the provider takes care of resource utilization and emissions, and the world becomes greener.

Additional Strategies for Environmental Sustainability

It is impossible to achieve absolute energy efficiency. We monitor the PUE coefficient and try to use more energy-efficient solutions for cooling, lighting, and other aspects of work.

Moreover, we properly dispose of batteries, ethylene glycol from cooling systems, and even waste paper. Everything that can be repaired is repaired. Try to adopt these practices to reduce your own impact on nature too.