Multi-Region DR: Redundancy That Is Never Excessive

Over the past 10 years, multi-region setups have been considered a best practice, but only large corporations were willing to allocate budgets for such redundancy. And while incidents with submarine cables in the Red Sea could still be perceived as random, the events of March 2026 elevate infrastructure risks to an entirely new level.

Operator error and hardware failure are no longer the most serious threats that disaster recovery has to address. Suddenly, physical geography and geopolitics have come to the forefront. Infrastructure doesn’t exist in a vacuum, and now even a data center in a stable region can become unavailable within 72 hours. This is a new reality that infrastructure architects must take into account.

The Fragility Paradox

The Internet is remarkably resilient. This is not surprising - its predecessor, the military network ARPANET, was originally designed to continue functioning even after significant parts of its infrastructure were destroyed.

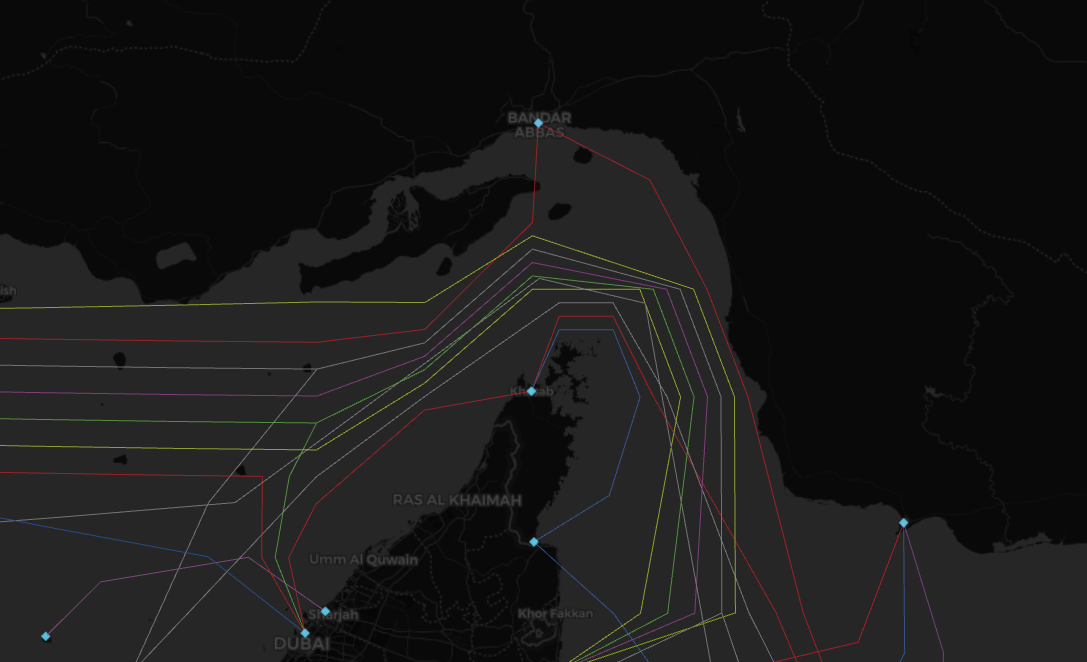

The “circulatory system” of the Internet consists of submarine backbone cables. They carry 95 percent of international traffic. And it is precisely these cables that are most often associated with the largest incidents in the history of global telecommunications. Despite their robustness, up to 200 cable breaks are recorded each year.

Some of these are, of course, pure accidents or natural disasters. In 2022, a volcanic eruption completely destroyed the submarine cable connecting the island Kingdom of Tonga to the outside world. An entire country of 110,000 people was cut off from the global Internet.

In addition to natural causes, cables also fail due to deliberate actions. In 2024, cables in the Baltic Sea connecting Finland with Western Europe (C-Lion 1) and Sweden with Lithuania (BCS East-West Interlink) were damaged almost simultaneously. Fortunately, these incidents had virtually no impact on users - the redundancy of the European network infrastructure helped.

That same year saw an even more illustrative case. The sinking vessel Rubymar in the Red Sea dragged its anchor, which easily tore through three major submarine cables. Nearly 90 percent of traffic between Europe and Asia simply disappeared. Due to the inability of repair ships to enter the conflict zone, restoration took months.

September 2025 - again the Red Sea. Four major backbones were cut simultaneously, causing immediate large-scale degradation of international connectivity. Yes, all affected providers switched to backup routes, and the system coped. Even so, Microsoft Azure informed customers that traffic passing through the Middle East would experience increased latency.

This is only a small subset of incidents that clearly demonstrated to the industry how easily connectivity can be disrupted, causing problems for both businesses and end users.

Force Majeure as the Norm

Nearly a quarter of global traffic passes through 15 submarine cables (out of 17 in the Red Sea), located close to each other in a narrow 30-kilometer corridor of the Bab-el-Mandeb Strait. This area remains a zone of heightened military risk. In March 2026, the risk reached the data centers themselves: AWS facilities in the UAE and Bahrain were attacked, resulting in fires, power disruptions, and physical damage to equipment.

Suddenly, it became clear that data centers must be protected not only from natural disasters and cyber threats. They have become potential targets requiring security at the state level. Gulf countries have spent billions of dollars building resilient computing infrastructure to support artificial intelligence, only to find that it was not designed to withstand deliberate destruction within a single region.

The culmination of the situation was a public statement by AWS. It explicitly recommended that customers migrate their workloads to other regions. Quoting directly from the dashboard:

“We continue to strongly recommend that customers with workloads running in the Middle East take action now to migrate those workloads to alternate AWS Regions. Customers should enact their disaster recovery plans, recover from remote backups stored in other Regions, and update their applications to direct traffic away from the affected Regions.”

Availability Zones No Longer Work

If we look at traditional highly available cloud architecture, most major providers divide infrastructure into regions, each containing several Availability Zones (AZ). Each AZ may consist of one or more physical data centers, but together they represent a single failure domain.

For the customer, only the AZ is visible; everything below that level is the provider’s internal concern. Therefore, the classical Multi-AZ approach has worked in most cases. Database replication, load balancing, and distributed queues are spread across AZs, covering most incidents - from hardware failures to scheduled maintenance.

However, this model has a non-obvious nuance. For synchronous replication between the AZs of a region, they must be relatively close to each other. Every additional 100 kilometers introduces roughly 1 ms of latency (2 ms round-trip). This is, of course, a very rough estimate, but beyond a certain distance the added latency makes synchronous replication impractical. In reality, one might assume that all three AZs of a region like ME-CENTRAL-1 are located within the Dubai metropolitan area. AWS does not disclose exact locations, although this did not prevent Iranian intelligence from identifying them.
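To make the rule of thumb concrete, here is a minimal sketch that applies the rough figure from the text (about 1 ms one-way per 100 km) to a synchronous write, which has to wait for at least one full round trip to the remote replica. The function name and the sample distances are illustrative assumptions, not measured values.

```python
# Rough estimate only: uses the rule of thumb from the text
# (about 1 ms one-way per 100 km), not measured network data.
MS_ONE_WAY_PER_100_KM = 1.0


def sync_write_penalty_ms(distance_km: float, round_trips: int = 1) -> float:
    """Extra latency a synchronous write pays while waiting for a remote replica."""
    one_way_ms = distance_km / 100.0 * MS_ONE_WAY_PER_100_KM
    return 2 * one_way_ms * round_trips


for km in (50, 500, 3000):  # hypothetical distances between sites
    print(f"{km:>5} km -> +{sync_write_penalty_ms(km):.0f} ms per synchronous commit")
```

Under this assumption, sites within one metropolitan area add about a millisecond per commit, while sites thousands of kilometers apart add tens of milliseconds, which is why AZs tend to be clustered.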

As a result, the weak point turned out to be that the system was designed to withstand the failure of a single AZ, while the attack affected two out of three. Instead of independent, albeit significant, failures, the architecture suffered a coordinated strike on a single geographic location.
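The arithmetic behind this can be sketched with a majority quorum, a common (though not universal) way to spread a replicated datastore across three AZs. This is an illustrative assumption, not a description of how any specific AWS service is built.

```python
def has_quorum(total_replicas: int, healthy_replicas: int) -> bool:
    """A majority quorum needs strictly more than half of the replicas."""
    return healthy_replicas > total_replicas // 2


# One replica per AZ in a three-AZ region (hypothetical layout).
for failed_azs in range(4):
    healthy = 3 - failed_azs
    state = "writable" if has_quorum(3, healthy) else "no quorum"
    print(f"{failed_azs} AZ(s) down: {state}")
```

With three replicas, losing one AZ leaves a majority, but losing two does not, so a design sized for single-AZ failure stops accepting writes even though one AZ is still running.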

What Should Architects Do Under These Conditions

The events of March clearly demonstrate that it is time to revisit the threat model and remove the implicit assumption that a region is a sufficiently stable unit of isolation. But before moving to design, one must answer an uncomfortable question: how much does an hour of downtime cost the business? Is the company prepared to be down for several hours or even days?
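A back-of-the-envelope way to approach that question is to compare the expected yearly loss from a regional outage with the yearly cost of keeping a second region ready. The sketch below is only arithmetic; every figure in it is a hypothetical placeholder to be replaced with your own estimates.

```python
def expected_annual_loss(cost_per_hour: float, outage_hours: float,
                         annual_probability: float) -> float:
    """Expected yearly loss from one class of outage."""
    return cost_per_hour * outage_hours * annual_probability


# All figures below are hypothetical placeholders.
loss = expected_annual_loss(cost_per_hour=20_000, outage_hours=48,
                            annual_probability=0.05)
dr_cost = 36_000  # assumed yearly cost of a standby region

print(f"expected loss: {loss:,.0f}, DR cost: {dr_cost:,.0f}")
print("DR pays for itself" if loss > dr_cost
      else "DR costs more than the expected loss")
```

The model deliberately ignores reputational damage and contractual penalties, which usually push the real figure higher.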

The answer determines the DR strategy. Based on the four standard AWS strategies, the following table can be constructed:

Let’s be honest - most companies do not need Active-Active. However, if a region becomes a possible failure domain, Backup & Restore is no longer sufficient.
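One way to turn the downtime answer into a starting point is a simple lookup from the tolerated RTO to a strategy tier. The four strategy names follow AWS's published DR guidance; the hour thresholds and the `region_can_fail` switch are illustrative assumptions, not values taken from AWS or from this article.

```python
# Assumed RTO ceilings in hours, from most to least expensive tier.
TIERS = [
    (0.1, "Multi-Site Active-Active"),
    (1.0, "Warm Standby"),
    (8.0, "Pilot Light"),
]


def pick_strategy(rto_hours: float, region_can_fail: bool = True) -> str:
    """Return the cheapest tier whose assumed RTO ceiling fits the target."""
    for ceiling, name in TIERS:
        if rto_hours <= ceiling:
            return name
    # Following the text: once a whole region can be lost,
    # Backup & Restore alone is treated as insufficient.
    return "Pilot Light" if region_can_fail else "Backup & Restore"


for rto in (0.05, 0.5, 4, 24):
    print(f"RTO {rto} h -> {pick_strategy(rto)}")
```

The thresholds are where the real discussion with the business happens; the code only makes the decision explicit and repeatable.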

Choosing Regions

When designing multi-region infrastructure, special attention must be paid to how independent the regions really are, and not only geographically. They must not share bottlenecks and should be connected via different physical backbone routes. It is also important that regions belong to different jurisdictions and do not share the same political perimeter.

For example, in AWS, the pair ME-CENTRAL-1 and ME-SOUTH-1 was not a reasonable choice in March 2026. Formally, they are different regions, but from a business resilience perspective, both are highly interdependent. A shared political environment and limited transit routes mean that these regions may behave not as two independent failure domains, but as one.
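This kind of comparison can be made explicit by tagging each candidate region with the risk attributes you care about and listing what any two regions have in common. The sketch below is a minimal illustration; the attribute names and their values are hypothetical labels, and real values require your own research into routes and jurisdictions.

```python
from itertools import combinations

# Hypothetical attributes for illustration only.
REGIONS = {
    "me-central-1": {"perimeter": "gulf", "transit": "red-sea"},
    "me-south-1":   {"perimeter": "gulf", "transit": "red-sea"},
    "eu-central-1": {"perimeter": "eu",   "transit": "terrestrial-eu"},
}


def shared_risks(a: str, b: str) -> list[str]:
    """Attributes on which two regions have the same value."""
    return [key for key in REGIONS[a] if REGIONS[a][key] == REGIONS[b][key]]


for a, b in combinations(REGIONS, 2):
    print(a, b, shared_risks(a, b) or "no shared risks in this model")
```

A pair that shares every attribute behaves like one failure domain in this model, however far apart the regions appear on a map.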

The conclusion is simple: multi-region DR should not be designed between the closest regions, but between the least interconnected ones that still meet business requirements. However, even a multi-region setup may still have shared dependencies.

Failures can occur not only at the compute level but also in DNS, payment gateways, office connectivity, and other services. If these remain single points of failure, a second region will not help - it will only create an expensive illusion of redundancy. Moreover, replicating data to another region does not guarantee recovery.
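A simple inventory makes such hidden dependencies visible. The sketch below assumes a hand-maintained map from each dependency to the locations it can be served from; the dependency names and the "primary"/"secondary" labels are hypothetical.

```python
# Hypothetical inventory: which locations each dependency can be served from.
DEPENDENCIES = {
    "compute": {"primary", "secondary"},
    "database": {"primary", "secondary"},
    "dns": {"primary"},
    "payment-gateway": {"primary"},
}


def single_points_of_failure(deps: dict[str, set[str]], lost: str) -> list[str]:
    """Dependencies with no remaining location once `lost` is gone."""
    return sorted(name for name, locations in deps.items()
                  if not locations - {lost})


print(single_points_of_failure(DEPENDENCIES, lost="primary"))
```

In this example the compute and database layers survive the loss of the primary region, but DNS and the payment gateway do not, so the failover would still leave the service unusable.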

From Abstraction to Practice

Ask yourself: what state will the system be in after failover? Will the application start? Will it retrieve current secrets from the vault? Will integrations break? Will queues be available? Will events be duplicated en masse?
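The last of these questions, mass duplication of events, is commonly handled by making consumers idempotent. Here is a minimal sketch, assuming every event carries a unique ID; the class and field names are hypothetical, and the in-memory set stands in for durable storage that would itself have to survive the failover.

```python
class IdempotentConsumer:
    """Applies each event at most once, keyed by its unique ID."""

    def __init__(self) -> None:
        self.seen: set[str] = set()    # stand-in for a durable dedup store
        self.applied: list[str] = []   # stand-in for real side effects

    def handle(self, event_id: str, payload: str) -> bool:
        """Return True if the event was applied, False if it was a duplicate."""
        if event_id in self.seen:
            return False
        self.seen.add(event_id)
        self.applied.append(payload)
        return True


consumer = IdempotentConsumer()
# After failover, a queue may redeliver events that were already processed.
for event_id, payload in [("e1", "charge"), ("e2", "ship"), ("e1", "charge")]:
    consumer.handle(event_id, payload)
print(consumer.applied)
```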

Instead of the abstract “Do we have DR?”, the discussion becomes a set of concrete questions:

- What failure scenarios do we consider realistic?

- Which components must survive regional loss?

- What is switched manually, and what is automated?

- Who decides when to trigger a failover?

- How do we prevent split-brain and data inconsistency?

- What is the process for failing back after recovery?
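For the split-brain question, one widely used technique is fencing: every promotion of a new primary increments an epoch number, and storage rejects writes that carry an older epoch. The sketch below is a simplified in-memory illustration with hypothetical names, not a production mechanism.

```python
class FencedStore:
    """Rejects writes that carry an older epoch than the newest one seen."""

    def __init__(self) -> None:
        self.epoch = 0
        self.data: dict[str, str] = {}

    def write(self, epoch: int, key: str, value: str) -> bool:
        if epoch < self.epoch:
            return False  # stale primary: it was fenced off by a later failover
        self.epoch = epoch
        self.data[key] = value
        return True


store = FencedStore()
store.write(1, "order-42", "created")            # old primary, epoch 1
store.write(2, "order-42", "paid")               # new primary after failover
accepted = store.write(1, "order-42", "cancelled")  # old primary wakes up
print(accepted, store.data["order-42"])
```

The old primary's late write is refused, so the two regions cannot silently overwrite each other's state.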

Answering these questions makes it clear that the secondary region must not merely store backups - it must be a prepared environment with a minimal viable set of services and a tested failover mechanism. This level of maturity allows most companies to survive regional loss without significant budget impact.

It is also important to define which data can be lost and which cannot. For stateful services, there is little room for cost-saving. Achieving near-zero RPO is a non-trivial engineering challenge that directly impacts performance, cost and operational complexity.

Testing and Failure Simulation as Part of Design

At sea, crews regularly conduct drills simulating emergency situations. The same applies here: DR architecture must not exist only on paper. The question is not “Can we fail over?” but “When was the last time we actually did it?”

If failover has never been executed, this is a bad sign. All RTO and RPO estimates remain purely theoretical - you don’t know how the system will behave in reality.

Practical readiness must include not only periodic drills simulating total regional failure but also measuring actual RTO/RPO values. Additionally, there must be dedicated procedures for returning to the primary region after recovery.
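Measuring the actual values needs nothing more than three timestamps recorded during a drill. The sketch below shows one way to compute them and compare them with the targets; the function name, the field names, and the sample times are hypothetical.

```python
from datetime import datetime, timedelta


def drill_report(outage_start: datetime, service_restored: datetime,
                 last_replicated_write: datetime,
                 rto_target: timedelta, rpo_target: timedelta) -> dict:
    """Compare measured RTO/RPO from a failover drill with the targets."""
    actual_rto = service_restored - outage_start      # time spent down
    actual_rpo = outage_start - last_replicated_write  # window of lost writes
    return {
        "rto": actual_rto,
        "rpo": actual_rpo,
        "rto_met": actual_rto <= rto_target,
        "rpo_met": actual_rpo <= rpo_target,
    }


report = drill_report(
    outage_start=datetime(2026, 4, 1, 10, 0),
    service_restored=datetime(2026, 4, 1, 11, 30),
    last_replicated_write=datetime(2026, 4, 1, 9, 58),
    rto_target=timedelta(hours=1),
    rpo_target=timedelta(minutes=5),
)
print(report)
```

In this made-up drill the RPO target is met but the RTO target is missed by half an hour, which is exactly the kind of gap that stays invisible until a failover is actually executed.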

Conclusion

It is better to learn from others’ mistakes. In March 2026, we saw a clear example of a scenario that could have been predicted, but whose probability was underestimated. A region is no longer a guaranteed failure boundary, and multi-region DR is becoming a baseline architectural requirement.

Fundamentally, nothing has changed - this isn’t a call to rush into building Active-Active systems with near-zero failover. However, for businesses that cannot tolerate prolonged downtime, there is a practical recommendation: consider moving to the next level of DR by distributing it across multiple regions.

In a critical moment, you will know for certain that your backup region is not theoretical - it is ready to take over the workload within an acceptable timeframe. And this is precisely the situation that will demonstrate to the business why additional resources are spent on redundant infrastructure.